With the announcement of the 2018 FMPP/LFPP RFA this week (tucked into the Specialty Crop Block Grant announcement), I wanted to alert you to the 2017 post below about the indicators that are included in the proposal.

(There is also a shorter version on FMC’s website. Here is the link to it.)

Congratulations to everyone who got their FMPP/LFPP grants in by the deadline yesterday. I talked or emailed with a few of you throughout that process and was impressed by the well-crafted strategies that I read and heard about.

As you can imagine, a lot of the calls I was on focused on the new prescribed indicators (performance/outcome measures) that were included with the RFP for the first time. Those were the same for FMPP as for LFPP projects and were:

OUTCOME 1: TO INCREASE CONSUMPTION OF AND ACCESS TO LOCALLY AND REGIONALLY PRODUCED AGRICULTURAL PRODUCTS.

Indicators

1. Of the [insert total number of] consumers, farm and ranch operations, or wholesale buyers reached,
   a. The number that gained knowledge on how to buy or sell local/regional food OR aggregate, store, produce, and/or distribute local/regional food
   b. The number that reported an intention to buy or sell local/regional food OR aggregate, store, produce, and/or distribute local/regional food
   c. The number that reported buying, selling, consuming more or supporting the consumption of local/regional food that they aggregate, store, produce, and/or distribute
2. Of the [insert total number of] individuals (culinary professionals, institutional kitchens, entrepreneurs such as kitchen incubators/shared-use kitchens, etc.) reached,
   a. The number that gained knowledge on how to access, produce, prepare, and/or preserve locally and regionally produced agricultural products
   b. The number that reported an intention to access, produce, prepare, and/or preserve locally and regionally produced agricultural products
   c. The number that reported supplementing their diets with locally and regionally produced agricultural products that they produced, prepared, preserved, and/or obtained

OUTCOME 2: INCREASE SALES AND CUSTOMERS OF LOCAL AND REGIONAL AGRICULTURAL PRODUCTS.

Indicator

1. Sales increased from $________ to $_________ and by ______ percent ((n final – n initial) / n initial × 100 = % change), as result of marketing and/or promotion activities during the project performance period.
2. Customer counts increased from [insert total number of] to [insert total number of] customers and by _____ percent ((n final – n initial) / n initial × 100 = % change) during the project performance period.

OUTCOME 3: DEVELOP NEW MARKET OPPORTUNITIES FOR FARM AND RANCH OPERATIONS SERVING LOCAL MARKETS.

Indicators

1. Number of new and/or existing delivery systems/access points of those reached that expanded and/or improved offerings of:
   a. ______farmers markets.
   b. ______roadside stands.
   c. ______community supported agriculture programs.
   d. ______agritourism activities.
   e. ______other direct producer-to-consumer market opportunities.
   f. ______local and regional Food Business Enterprises that process, aggregate, distribute, or store locally and regionally produced agricultural products.
2. Number of local and regional farmers and ranchers, processors, aggregators, and/or distributors that reported:
   a. an increase in revenue expressed in dollars: _____
   b. a gained knowledge about new market opportunities through technical assistance and education programs: ______
3. Number of:
   a. new rural/urban careers created (Difference between “jobs” and “careers”: jobs are net gain of paid employment; new businesses created or adopted can indicate new careers): _______
   b. jobs maintained/created: _______
   c. new beginning farmers who went into local/regional food production: _____
   d. socially disadvantaged farmers who went into local/regional food production: ______
   e. business plans developed: ____

OUTCOME 4: IMPROVE THE FOOD SAFETY OF LOCALLY AND REGIONALLY PRODUCED AGRICULTURAL PRODUCTS.

Indicator(s) – Only applicable to projects focused on food safety.

1. Number of individuals who learned about prevention, detection, control, and intervention through food safety practices: _____
2. Number of those individuals who reported increasing their food safety skills and knowledge: ______
3. Number of growers or producers who obtained on-farm food safety certifications (such as Good Agricultural Practices or Good Handling Practices): _____

The applicant is also required to develop at least one project-specific outcome(s) and indicator(s) in the Project Narrative and must explain how data will be collected to report on each applicable outcome and indicator.

These confounded many, while others knew exactly how to use these to define their grant’s outcomes. I hope that USDA calls in some of those who do a bang up job in setting and achieving their numbers to talk with the newbies in future years.

Because of the previous work on the trans•act tools (which include the SEED tool) while at Market Umbrella, and the more recent and engrossing Farmers Market Metrics (FMM) work I have been doing with FMC and their partners these last few years, I have become very familiar with this language and these indicators. Most are included in the metrics chosen by FMC to be collected starting in 2016 with FMM through their own projects and through offering support to networks that are ready to embed evaluation systems in their projects.

Since I spent some time working with various project leaders on this, I thought I’d give my two cents here as to how I’d approach these if I were the lead. In this post, I’m going to talk about my general theory of data at the grassroots level and the first two outcomes; I’ll tackle #3 and #4 and unique indicators in upcoming posts.

Some may disagree with my assessment of how to handle these indicators, which to me is actually a good thing: by tackling this in varying ways, we are likely to hit on the best methods of establishing these baseline numbers and of collecting the data.

The first thing that confounded some proposal writers is how every indicator could be met by the varied projects: of course, they cannot and are not expected to. Since some projects are focused only on increasing sales at a market and not on increasing the number of outlets, some indicators are more relevant than others and should be used in more detail. Remember, these indicators are for both FMPP and LFPP projects, which cover a wide spectrum, and so are meant to support the general outcomes for all. It is my opinion that the unique indicators asked for at the end are likely to be the most useful for reviewers to read closely in order to match to the narrative or budget. I’d expect though that those proposals that could not reasonably answer a majority of the indicators with numbers will suffer in that reviewing process. USDA seemed to expect the same, as they recommended in their webinar that everyone explain those that they couldn’t answer or, if possible, add a piece to their project to address that indicator. And I think you can assume that USDA was being firm in saying that this pot of money should result in changes of these kinds, so if your project cannot reasonably do any of them, maybe look elsewhere for support.

I think the best way to really make these outcomes accurate is for the project lead to write them with the vision of using them as a banner to fly throughout the term of the project for the team to hit, surpass or to discuss why they cannot be met and what that means. The numbers should be slightly lofty: it is better to extend the reach at the outset and urge the team to do their best work to reach or even surpass it. However, don’t just throw some outrageous numbers in there or you will be telling the reviewers and your team that you have no intention of achieving them. So even though I used the word lofty, there is something to be said for being efficient with your project through establishing very precise numbers too.

Efficiency is a good plan for our tiny organizations in order to conserve our own and our vendors’ energy for the long haul and to be there for another day. How well we plan and how we address our assumptions about those we hope to reach have a lot to do with setting numbers and meeting or exceeding them.

Okay let’s look at the first two outcomes now:

Outcome 1: Increase consumption and access.

The indicators that are clustered with this outcome are related, meaning that once you have established (a) the number of buyers and/or producers that gained knowledge, you can then estimate (b) the number of those that then report an intention and then finally, (c) the number that reported actually buying, selling, aggregating etc. The second part of this outcome covers professionals like chefs or incubator-users; if the project is expecting to reach that audience, then they are also going to be measured for knowledge, intention and actual activity.

I think this one was written particularly well, as it takes a project step by step through the process of establishing its reach. This should have been relatively easy for most projects, as knowing how many people you plan on reaching is sort of 101 for FMPP or any USDA grant!

USDA’s suggestion was to write them out as a mathematical formula: write a beginning number, then the number you want to hit, and then calculate the percentage of increase. It may be helpful to do that in 2 columns and consider both the direct and indirect ways that your project will reach people. Certainly, if you are doing training or workshops you can estimate your attendance, but how about those who just read about your training or workshop and track down the info that way? How about through the media that your project uses to gain attendees? Is it reasonable to think that others will hear about the market or outlet and begin to attend because of it? And never forget the vendors; include them in any project outcome, even if it is a straight up new shopper project, since the vendors also can learn about the marketing and use it in their own sales reach if it is shared properly. And of course, how about the project partners and their reach?
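For those who like to see the arithmetic laid out, here is a rough Python sketch of the two-column idea and the percent-change formula from the indicators. Every name and number in it is a placeholder I made up for illustration (the reach categories, the counts, the baseline); it is only one way to set this up, not anything USDA prescribes.

```python
def percent_change(n_initial, n_final):
    """Percent change: (n final - n initial) / n initial x 100."""
    if n_initial == 0:
        raise ValueError("a zero baseline has no percent change; report the raw numbers")
    return (n_final - n_initial) / n_initial * 100


# Hypothetical two-column reach estimate: direct and indirect.
direct_reach = {"workshop attendees": 120, "vendors trained": 30}
indirect_reach = {"newsletter readers": 200, "partner referrals": 50}

# Hypothetical baseline: people the project already reaches today.
baseline = 400
target = baseline + sum(direct_reach.values()) + sum(indirect_reach.values())

print(f"Reach grows from {baseline} to {target}, "
      f"a {percent_change(baseline, target):.0f} percent increase")
```

Keeping the direct and indirect columns separate makes it easier to explain later which assumptions held up and which did not.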

Once you set the number who will gain knowledge (and I think that your project should plan that just about everyone that gets your materials or attends your workshop will gain knowledge) you then think about who will change their behavior because of it. I wonder what differences we’d see if I had a group of market managers and a group of vendors in one room and asked them: if 1,000 people are reached through materials or training, how many will actually intend to use it, and then how many will actually use that knowledge to buy, sell, aggregate, etc.? That estimate can vary, based on the perspective and experience of those setting the number.

My feeling would be that the vendors would assume that more people will intend to come but would think that fewer will actually buy. I say that because they deal with everyone directly and know painfully well how many pass by their table without eye contact or a deep perusal of what is for sale. So they know firsthand how hard it is to get people to actually do something. I’d say that managers would be more likely to think more people will be reached but that fewer would report an intention to come to a market, but that once they are there, a higher percentage will purchase. My assumption may be entirely wrong and maybe someday I can test it and readjust it. The most important thing is to test your project assumptions by asking everyone for numbers and adjusting them according to their bias and experience and according to your plan.
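To make that comparison concrete, here is a small Python sketch that walks the same 1,000 people through the knowledge, intention and action steps under two sets of guesses. The rates are pure placeholders that I picked to mirror my hunch above; they are not survey results, and your own team’s numbers should replace them.

```python
def funnel(reached, knowledge_rate, intention_rate, action_rate):
    """Walk a reach number through indicator steps (a), (b) and (c).

    Each rate is applied to the step before it, so the three results
    can only shrink (or hold steady) as you move down the list.
    """
    gained_knowledge = round(reached * knowledge_rate)
    reported_intention = round(gained_knowledge * intention_rate)
    reported_action = round(reported_intention * action_rate)
    return gained_knowledge, reported_intention, reported_action


# Placeholder rates only: (knowledge, intention, action).
guesses = {
    "vendors": (0.95, 0.60, 0.20),   # many intend, few actually buy
    "managers": (0.95, 0.40, 0.50),  # fewer intend, more of those buy
}

for who, rates in guesses.items():
    a, b, c = funnel(1000, *rates)
    print(f"{who}: (a) {a} gained knowledge, (b) {b} intend, (c) {c} acted")
```

Seeing the two sets of guesses side by side is a quick way to start the conversation about whose assumptions to use for the proposal.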

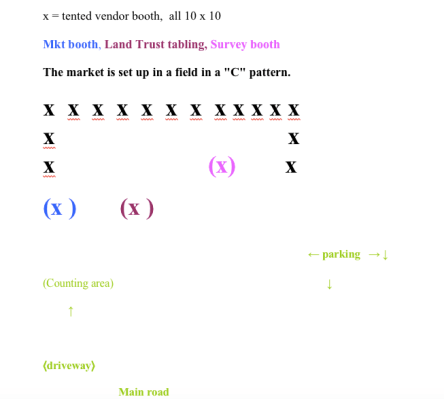

I also think percentages without numbers can be difficult to be realistic about, so I often suggest that people start on the wrong end: if the project is for increasing shoppers to a single market, how many more shoppers could that market actually handle per week? 100? 200? 1000? Think about the vendors and your space and your Welcome Booth and visualize adding that number every week. Would it overwhelm the market? Do you have enough parking or access to transportation to make it happen? How many added shoppers per hour would that mean to your anchor vendors? Is that worth it?

Remember that the average shopper in most markets spends between 10 and 30 dollars, so using those numbers above, the market would add another $1,000 to $30,000 a week in sales. Pretty cool huh? Or if you hope to add another market day: maybe your Saturday market has 45 vendors on average, and you might estimate that since your new market is smaller and has less parking, you hope 25 or so can use this new outlet. In both cases, your initial outreach has to be wider than the final number, as some will not get to your market or have the ability to add market days even when told of the opportunity.
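The back-of-the-envelope math above can be written out as a quick Python sketch. It only uses the figures already mentioned (100, 200 or 1,000 added shoppers a week, each spending $10 to $30); the function name is my own invention.

```python
def weekly_sales_range(added_shoppers, low_spend=10, high_spend=30):
    """Low and high estimate of added weekly sales, in dollars."""
    return added_shoppers * low_spend, added_shoppers * high_spend


for shoppers in (100, 200, 1000):
    low, high = weekly_sales_range(shoppers)
    print(f"{shoppers} added shoppers: ${low:,} to ${high:,} per week")
```

Swap in your own market’s average spend if you have it; the range is only as good as that number.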

Outcome 2: To increase sales

Couldn’t be simpler as, in most cases, FMPP projects are still chiefly attempting to increase sales. It may be true that at some later date, sales increases are not the primary indicator of the success of our work, but with the small reach that alternative food outlets currently have with food shoppers, I agree that this should still be the main goal. Even so, this indicator stymied more people (and I would imagine contributed to some not writing a grant at all) and since it is a common metric for FMM, I’m going to attempt to reason why it is necessary and how we can capture this.

Measuring an increase of sales for a project that is going to do marketing or outreach for a single sales outlet is pretty standard. The issue is that you need a baseline number (starting point) and that is the thing many markets do not have yet. So how do you find the baseline?

Everyone knows that the majority of markets ask for standard stall fees which are not based on vendors’ sales percentages and because of that, many markets have never asked for sales data from their vendors*. What USDA, FMM, Wholesome Wave and others are now saying is that we need to know the impact of our work whether you collect this data for the market’s fee rates or not. So, for those who do already collect it, you are ahead of the curve and probably have a lot to teach the rest of us about how to do it well.

So how do the rest of us do it? Well, the simplest way is to ask vendors directly, either every market day, every month or every season. As you can imagine, the longer you wait to ask this, the more difficult it becomes for the vendor to separate the numbers from your market from the other outlets he/she sells at. However, it also is difficult for multi-tasking vendors to stop at the end of the day to count their money and get that number to you. So what works best? My answer is one that some people hate hearing: whatever works best for your community and your management level is what works best, as long as it gives you accurate data in increments acceptable to those using it.

I’ll talk your ear off about accurate data whenever discussing market evaluation because it is my experience that markets rely too much on anecdotal information and estimates that probably are better described as guesstimates as they have almost no basis in real numbers. I can hear many of you yelling at me through your computer that you are not evaluators and cannot be expected to gather data. My answer to that is as soon as you create projects that use the resources of partners and promise your community some change in behavior because of these efforts, you are both. Meaning as soon as you decided to run a market. (You like how I run the entire argument on my own and that I get the last word?)

However, I am in agreement with many market leaders and vendors that too much data is often asked of markets or vendors that is never used or not shared back with those who offered it. And of course, that collecting the data and the costs associated are almost never added to the cost of any project, and usually, partners just assume that overworked market communities will just throw that added work in their long list and get it to them toot sweet.

Yeah, don’t get me started on data collection challenges here.

Additionally, sales data is at the top of the sensitive information asked presently and I often ask managers or market partners to tell me how much is in their bank account right now as an example of how asking for information without context or reason is alarming to say the least. That is, if you even know a precise number! So I say first be the change you want to see by sharing market data with vendors regularly: token sales for debit are going up but SNAP is steady? What do you think that means? And then ask them what they think it means.

Asking for it in anonymous sales slips is the way FMM suggests it is collected, but I assume that there are other good methods to test. And that it helps all of those methods when the raw data is shared with the vendors and it is used to advocate for their needs. It must be said that to be able to use it in aggregate means it has to be collected in the same way for the same time period and a lot more data is needed to get to any collective contribution, so we do need to hit upon some common methods sooner rather than later. Here are two more possibilities:

And as many of you know, the SEED tool asks shoppers to estimate their purchases and then calculates overall sales from those numbers. Many feel this method of getting sales is better, but it does require more surveying of shoppers more often which means added staff and volunteers.

Another way may come as some markets grow their token systems. Depending on your market, it might be possible to estimate how many of your shoppers use that system and whether it is representative of the type of overall shopper you have and use the data to estimate sales.
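Here is a loose Python sketch of the arithmetic behind both of those possibilities. To be clear, this is not the SEED tool’s actual calculation, which I am not reproducing here; it is just the simplest version of each idea, and the survey answers, shopper count and token share are all made-up placeholders.

```python
from statistics import mean


def sales_from_shopper_survey(reported_spends, shopper_count):
    """Average reported spend times the estimated number of shoppers that day."""
    if not reported_spends:
        raise ValueError("need at least one survey response")
    return mean(reported_spends) * shopper_count


def sales_from_tokens(token_sales, token_share_of_spending):
    """Scale up token sales by the share of all spending you believe uses tokens."""
    if not 0 < token_share_of_spending <= 1:
        raise ValueError("share must be greater than 0 and no more than 1")
    return token_sales / token_share_of_spending


# Placeholder inputs for one market day.
survey_answers = [12, 25, 18, 30, 15]   # dollars each surveyed shopper said they spent
survey_estimate = sales_from_shopper_survey(survey_answers, shopper_count=800)
token_estimate = sales_from_tokens(token_sales=4000, token_share_of_spending=0.25)

print(f"Survey-based estimate: ${survey_estimate:,.0f}")
print(f"Token-based estimate:  ${token_estimate:,.0f}")
```

Both estimates lean hard on one assumption each (that surveyed shoppers are typical, or that token users are), so it is worth writing that assumption down next to the number.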

The main point is we have to agree that we need some data and it should be as precise as possible without violating privacy or exposing weaknesses in one business over another; after all, this is a competitive place. The data you can use for internal analysis as to the market’s impact on its vendors and shoppers can be a lot less and a lot less specific than the data your research partners will need when they start to calculate economic numbers. And until you have actual data, how you calculated your starting point for these indicators says a lot about your circle of advisors, your experience and your knowledge of the target population.

Whew; enough for now. I’d love to hear how some of you did calculate both of these outcomes and especially sales, both in systems that had baselines and in ones that did not. I expect that some of you will disagree with much of my unscientific approach to measurement but hope you know that I welcome your opinions.